Windows supports digitally signing EXEs and other application files so that you can verify the provenance of software before it executes on your system. This is an important element in the defense against malware. When a software publisher like Adobe signs its application, it uses the private key associated with a certificate it has obtained from one of the major certification authorities (CAs), such as Verisign.

Later, when you attempt to run the program, Windows can check the file’s signature and verify both that it was signed by Adobe and that its bits haven’t been tampered with, for example by the insertion of malicious code.
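To make the sign-then-verify idea concrete, here is a deliberately toy sketch in Python using textbook RSA over a SHA-256 digest. It is not how Authenticode actually works: real code signing uses large keys, padding schemes and X.509 certificate chains. The key values, function names and sample bytes are all illustrative assumptions. What it shows is why a signature made with the publisher’s private key stops matching as soon as any byte of the file changes.

```python
import hashlib

# Toy key built from two Mersenne primes (2**61 - 1 and 2**89 - 1).
# Far too small and predictable for real use; chosen only so the demo runs instantly.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q                            # public modulus
E = 65537                            # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent (modular inverse, Python 3.8+)


def digest(data: bytes) -> int:
    """SHA-256 of the file bytes as an integer, reduced mod N to fit the toy key."""
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % N


def sign(data: bytes) -> int:
    """Publisher side: transform the digest with the PRIVATE exponent D."""
    return pow(digest(data), D, N)


def verify(data: bytes, signature: int) -> bool:
    """Consumer side: undo the signature with the PUBLIC exponent E and compare digests."""
    return pow(signature, E, N) == digest(data)


if __name__ == "__main__":
    program = b"pretend these are the bytes of a signed installer"
    sig = sign(program)
    print(verify(program, sig))                        # True
    print(verify(program + b"<injected code>", sig))   # False
```

Note that everything rests on `D` staying secret: anyone who holds it can produce signatures that verify just as well as the publisher’s own.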

By default, Windows doesn’t enforce digital signatures or limit which publishers’ programs can execute, but you can enable that with AppLocker. As powerful as AppLocker potentially is, it is also complicated to set up, except in environments with a very limited and standardized set of applications: at a minimum, you must create rules for every publisher whose code runs on your systems.
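The default-deny logic behind this can be modeled in a few lines of Python. This is a simplified sketch, not AppLocker’s actual engine: real AppLocker also supports path and file-hash rules and can match on product name, file name and version. `FileInfo`, `evaluate` and the publisher strings are hypothetical. It shows why every publisher needs a rule, and what audit mode changes: a non-matching program is logged rather than blocked.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FileInfo:
    """Hypothetical summary of what the OS knows about a file about to run."""
    path: str
    publisher: Optional[str]       # signer's publisher name, or None if unsigned
    signature_valid: bool = False


def evaluate(f: FileInfo, allowed_publishers: set[str], enforce: bool) -> tuple[str, str]:
    """Return (decision, reason) under a default-deny, publisher-only policy."""
    if f.publisher is None:
        reason = "unsigned"
    elif not f.signature_valid:
        reason = "invalid signature"
    elif f.publisher not in allowed_publishers:
        reason = "publisher not trusted"
    else:
        return ("allowed", "matched publisher rule")
    # No allow rule matched: enforce mode blocks, audit mode lets it run but logs a warning.
    return ("blocked" if enforce else "audited", reason)


if __name__ == "__main__":
    policy = {"Adobe Systems Incorporated"}
    print(evaluate(FileInfo(r"C:\Tools\unknown.exe", None), policy, enforce=False))
    # ('audited', 'unsigned')
```

Flipping `enforce` is the whole difference between audit and enforcement, which is why audit mode is a low-risk way to find out which publishers your policy is still missing.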

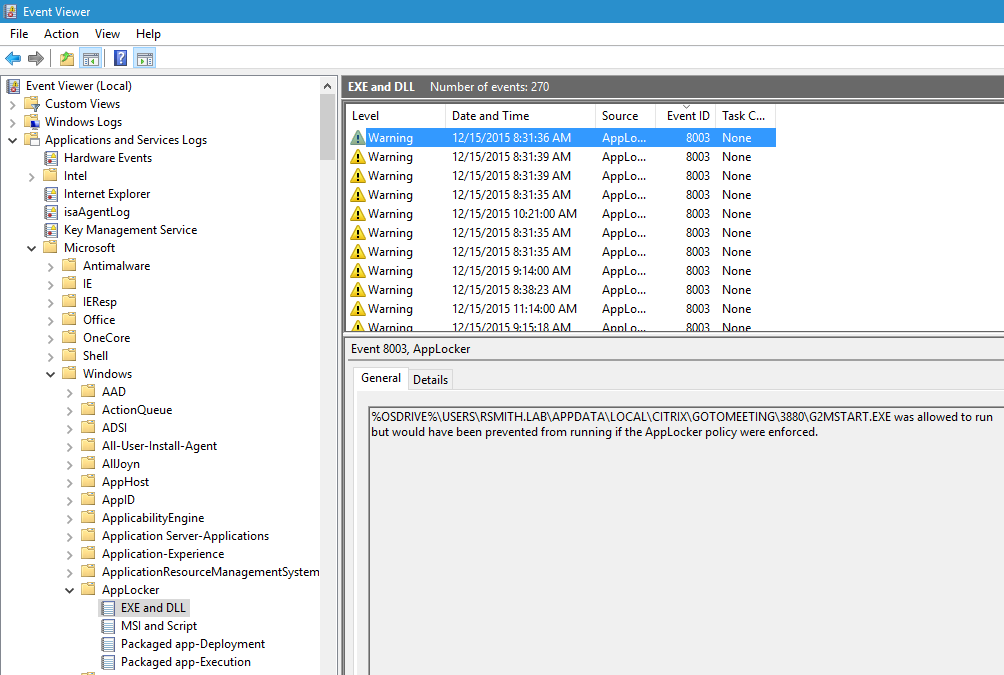

The good news, however, is that AppLocker can also run in audit mode, and you can quickly set up a base set of allow rules by having AppLocker scan a sample system. The idea behind audit mode is that you then monitor the AppLocker event logs for warnings about programs that failed to match any of the allow rules, which means the program has an invalid signature, was signed by a publisher you don’t trust, or isn’t signed at all. The events to look for are 8003, 8006, 8021 and 8024, and they are recorded in the logs under AppLocker, as shown above.

If you are going to use AppLocker in audit mode to detect untrusted software, remember that Windows logs these events locally on each system. So be sure to collect them centrally, either with a SIEM that has an efficient agent, like EventTracker, or with Windows Event Forwarding.
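Once the events are exported, for example with `wevtutil qe "Microsoft-Windows-AppLocker/EXE and DLL" /f:xml` (and likewise for the other AppLocker logs), a short Python sketch can pull out just the audit warnings 8003, 8006, 8021 and 8024 and tally them per computer. It assumes wevtutil’s XML layout, a bare sequence of `<Event>` elements in the standard Windows event namespace, and reads only the `EventID` and `Computer` fields. The function names and sample data are illustrative; a SIEM agent or Windows Event Forwarding would normally handle the collection itself.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET
from collections import Counter

# Standard namespace used by Windows event XML.
NS = {"e": "http://schemas.microsoft.com/win/2004/08/events/event"}
# AppLocker audit-mode warning IDs to watch for.
AUDIT_EVENT_IDS = {8003, 8006, 8021, 8024}


def audit_warnings(xml_text: str) -> list[dict[str, object]]:
    """Return the ID and computer name of every AppLocker audit warning in xml_text."""
    # wevtutil's XML output has no single root element, so wrap it in one.
    root = ET.fromstring(f"<Events>{xml_text}</Events>")
    found = []
    for event in root.findall("e:Event", NS):
        system = event.find("e:System", NS)
        if system is None:
            continue
        event_id = int(system.findtext("e:EventID", default="0", namespaces=NS))
        if event_id in AUDIT_EVENT_IDS:
            computer = system.findtext("e:Computer", default="?", namespaces=NS)
            found.append({"id": event_id, "computer": computer})
    return found


def count_by_computer(warnings: list[dict[str, object]]) -> Counter:
    """Tally warnings per endpoint so the noisiest machines surface first."""
    return Counter(w["computer"] for w in warnings)
```

Calling `count_by_computer(audit_warnings(text)).most_common()` gives a quick ranking of which endpoints are running the most unrecognized software.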

Better yet, if you have EventTracker, don’t bother with AppLocker: use EventTracker’s automatic Digital Forensics and Incident Response feature for unknown processes. EventTracker watches each process (and soon each DLL) that your endpoints load and checks the EXE’s hash against your environment’s local whitelist, which EventTracker can build automatically. If the hash isn’t found there, EventTracker checks it against the National Software Reference Library (NSRL). If the EXE still isn’t confirmed as legitimate, EventTracker posts it to the dashboard for you to review, automatically including publisher information if the file is signed, plus other forensics such as the endpoint, user and parent process. With one click you can check the process against anti-malware sites such as VirusTotal. EventTracker goes well beyond AppLocker, both in detecting suspicious software and in giving you the tools and information to quickly determine whether a program is a risk, including through the use of digital signatures.
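The tiered lookup described above (local whitelist first, then a reference library, then a human) can be sketched in a few lines of Python. This is not EventTracker’s implementation: the function names are hypothetical, using SHA-256 is an assumption, and `reference_set` simply stands in for a hash set such as the NSRL’s, whose actual distribution format you would need to load separately.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in 1 MiB chunks so large executables needn't fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def classify(digest: str, local_whitelist: set[str], reference_set: set[str]) -> str:
    """Tiered lookup: local whitelist first, then a reference hash set, else flag it."""
    if digest in local_whitelist:
        return "known-local"
    if digest in reference_set:
        return "known-reference"
    return "needs-review"  # unknown everywhere: put it in front of an analyst


def triage(path: Path, local_whitelist: set[str], reference_set: set[str]) -> str:
    """Hash the executable on disk and classify it."""
    return classify(sha256_of(path), local_whitelist, reference_set)
```

Checking the local whitelist first keeps the common case cheap; only binaries unknown to both sets reach the review queue.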

There are some other issues to be aware of, though, with digitally signed applications and certificates. Certificates are part of a very complicated technology called Public Key Infrastructure (PKI). PKI has so many components and ties together so many different parties that there is, unfortunately, a lot of room for error. Here’s a brief list of what has gone wrong in the past year or so with signed applications and the PKI that signatures depend on:

- Compromised code-signing server: I said earlier that code signing allows you to make sure a program really came from the publisher and that it hasn’t been modified (tampered with). But that depends on how well the publisher protects its private key, and unfortunately Adobe is a case in point. A while back, some bad guys broke into Adobe’s network and eventually found their way to the very server Adobe uses to sign applications like Acrobat. They uploaded their own malware, signed it with the private key of Adobe’s code-signing certificate, and then deployed it to target systems, which graciously ran it as a trusted Adobe application. How do you protect against publishers that get hacked? There’s only so much you can do. You can create stricter rules that limit execution to specific versions of known applications, but of course that makes your policy much more fragile.

- Fraudulently obtained certificates: Everything in PKI depends on the Certification Authority issuing certificates only after rigorously verifying that the party purchasing the certificate is really who it says it is. This doesn’t always work. A pretty recent example is Spymel, a piece of malware signed with a certificate DigiCert issued to a company called SBO Invest. What can you do here? Well, using something like AppLocker to limit software to known publishers does help in this case. Of course, if the CA itself is hacked, then you can’t trust any certificate it has issued. And that brings us to the next point.

- Untrustworthy CAs: I’ve always been amazed at how many CAs Windows trusts out of the box. It’s better than it used to be, but I remember that at one time my Windows 2000 system automatically trusted certificates issued by some government agency of Peru. You don’t have to trust every CA Microsoft does, though. Trusted CAs are defined in the Trusted Root Certification Authorities store, which you can view in the Certificates MMC snap-in, and you can control the contents of this store centrally via Group Policy.

- Insecure CAs from PC vendors: Late last year, Dell made headlines when it was discovered that it was shipping PCs with its own CA’s certificate in the Trusted Root store. This was so that drivers and other files signed by Dell would be trusted. That might have been OK, but Dell mistakenly broke The Number One Rule in PKI: it failed to keep the private key private. That’s bad with any certificate, let alone a CA’s root certificate. Specifically, Dell included the private key with the certificate. That allowed anyone who bought an affected Dell PC to sign their own custom malware with Dell’s private key and deploy it to other affected Dell systems, where it would run with impunity because it appeared to be legitimate and from Dell.
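The last two points both come down to how chain building works: a certificate is trusted if its issuer links lead to a self-signed root that sits in your Trusted Root store. The toy Python model below follows names only; real Windows chain validation also checks each signature, validity dates, key usage and revocation. `Cert`, `chains_to_trusted_root` and the CA names are hypothetical. Removing a root from `trusted_roots` mirrors pruning the store via Group Policy, and anyone holding a trusted root’s private key can mint certificates that would pass those real checks as well.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Cert:
    subject: str
    issuer: str  # equal to subject for a self-signed root


def chains_to_trusted_root(leaf: Cert, certs_by_subject: dict[str, Cert],
                           trusted_roots: set[str], max_depth: int = 10) -> bool:
    """Walk issuer links from the leaf up to a self-signed root and check the root store."""
    cert = leaf
    for _ in range(max_depth):
        if cert.issuer == cert.subject:       # reached a self-signed root
            return cert.subject in trusted_roots
        parent = certs_by_subject.get(cert.issuer)
        if parent is None:                    # issuer unknown: chain can't be built
            return False
        cert = parent
    return False                              # chain too long (or looping): give up


if __name__ == "__main__":
    known = {"Vendor Root": Cert("Vendor Root", "Vendor Root")}
    malware_cert = Cert("Totally Legit Driver", "Vendor Root")  # minted with a leaked root key
    print(chains_to_trusted_root(malware_cert, known, {"Vendor Root"}))  # True
    print(chains_to_trusted_root(malware_cert, known, set()))           # False: root removed
```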

So, certificates and code signing are far from perfect, but show me a security control that is. I really encourage you to try out AppLocker in audit mode and monitor the warnings it produces. You won’t break the user experience, the performance impact is hardly measurable, and if you are monitoring those warnings, you might just detect malware the first time it executes instead of after the 6 months or so it takes on average.